This is the first in a series of posts on Wikipedia, DBpedia and data mining.

Like many others, I have tried to analyse the free text and the metadata in Wikipedia. Like some, I have also tested different approaches to finding the optimal weights for different metadata collections, both for judging the relevance of textual data and for validating text mining results.

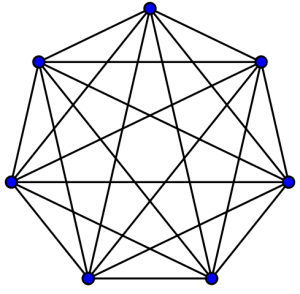

One interesting point I once discussed is how the formal analysis of the graph structures of metadata can help us validate and weight textual and semi-textual data. By semi-textual data I mean, above all, Wikipedia categories.

It turns out that if you let a robot use the translation links of Wikipedia articles to build graphs and check whether those graphs are complete, you can detect, to some extent, how mappable the Wikipedia categories of those entries are and how problematic they can be for generating ontologies or proto-ontologies.
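The completeness check can be illustrated with a minimal Python sketch. It assumes the translation links have already been collected into a dictionary that maps each article, identified by a (language, title) pair, to the articles it links to. The sample data, the placeholder titles and the function names are all hypothetical, and this is only one simple way to run such a test, not a description of any particular robot.

```python
from itertools import combinations

# Hypothetical translation links: (language, title) -> linked (language, title) pairs.
# The titles are placeholders, not real Wikipedia data.
LINKS = {
    ("en", "A"): {("de", "A"), ("zh", "A")},
    ("de", "A"): {("en", "A"), ("zh", "A")},
    ("zh", "A"): {("en", "A"), ("de", "A")},
    ("en", "B"): {("de", "B")},
    ("de", "B"): {("en", "B"), ("zh", "B")},
    ("zh", "B"): {("de", "B")},
}


def build_undirected(links):
    """Treat every translation link as an undirected edge between two articles."""
    graph = {}
    for source, targets in links.items():
        graph.setdefault(source, set())
        for target in targets:
            graph.setdefault(target, set())
            graph[source].add(target)
            graph[target].add(source)
    return graph


def components(graph):
    """Return the connected components of the graph as a list of node sets."""
    seen, result = set(), []
    for start in graph:
        if start in seen:
            continue
        stack, component = [start], set()
        while stack:
            node = stack.pop()
            if node in component:
                continue
            component.add(node)
            stack.extend(graph[node] - component)
        seen |= component
        result.append(component)
    return result


def is_complete(graph, component):
    """True if every pair of articles in the component is directly linked."""
    return all(b in graph[a] for a, b in combinations(component, 2))


graph = build_undirected(LINKS)
for component in components(graph):
    status = "complete" if is_complete(graph, component) else "incomplete"
    print(sorted(component), status)
```

In this made-up data the three "A" articles all link to each other, so their graph is complete. The "B" articles are connected only through the German entry, so their graph is incomplete, which is the kind of case that would be flagged for a closer look at the categories involved.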

For some time now, though, Wikipedia has used a system in which translation links are managed in a central place and all-to-all translations are all but enforced, which makes the completeness test on article links less informative. But we can also use Wikipedia categories and the graphs produced by linking parent categories, sibling categories and category translations. This is a more productive area. I will go into it in the next post, with some examples from mining the German, Chinese and English Wikipedia projects.